Only 7% of organizations deploy AI models daily, according to the CNCF's 2025 Annual Survey.

If your engineering team could only deploy application updates once a week or once a month, you'd consider it a crisis. You'd be investigating pipeline bottlenecks, automating manual gates, and removing every friction point between code and production. Daily deployments have become the benchmark for high performing software teams.

So why are we accepting a deployment velocity for AI that we'd never tolerate for applications?

The standard explanation is that AI is different. Models are complex. Data science teams work differently than software engineers. Machine learning introduces unique challenges. All true. But the CNCF data reveals something more uncomfortable: 47% of organizations deploy AI models only "occasionally." Another 46% fall somewhere in between daily and occasionally. That's 93% treating AI deployment as an exception rather than a routine operation.

The gap comes down to delivery infrastructure. The same practices enabling rapid application delivery – CI/CD automation, GitOps workflows, robust observability – are what's missing from most organizations' approach to deploying and operating AI in production.

Here's the question: Are we willing to build AI-ready delivery systems, or will we accept that AI deployment velocity lags a decade behind everything else we ship?

The real problem: Delivery systems weren't built for this

Model serving stresses delivery systems differently than traditional applications do, and it shows up in the metrics.

DORA's 2024 analysis found that higher AI adoption correlates with lower engineering performance. Teams that increased AI usage by 25% experienced a 1.5% reduction in throughput and a 7.2% decline in system stability. Beyond being hard to deploy, AI slows down delivery pipelines and makes them less reliable. CI/CD pipelines optimized for stateless applications break under the weight of model serving.

Google Cloud's MLOps documentation identifies the core mismatch. Traditional software relies on unit tests and integration tests to provide deployment confidence. AI models require statistical validation through testing performance on holdout datasets, which is a fundamentally different validation gate that doesn't fit neatly into existing pipelines. Models frequently fail in production when data distribution shifts or environment dynamics change, requiring continuous monitoring and retraining that most delivery systems weren't designed to handle. Google's research also describes the "disconnection between ML and operations," where data scientists hand over trained models as artifacts and engineering teams struggle to deploy them without understanding model-specific requirements.

Model serving differs from application deployment in three ways:

Validation gates require statistical testing rather than functional testing. You can't simply check if the model returns a result. You need to verify it returns accurate results within acceptable latency and resource constraints.

Artifact management involves multi-gigabyte model files rather than lightweight code packages. Container registries and CI/CD systems built for kilobyte-scale artifacts struggle with models that can exceed several gigabytes, creating bottlenecks in build and deployment pipelines.

Resource orchestration demands GPU scheduling and node affinity rather than standard compute allocation. Models need to land on appropriately resourced nodes, often with specific hardware requirements that traditional orchestration platforms handle awkwardly.

These fundamental mismatches explain why most organizations are running production ML workloads on delivery systems designed for a different era.

You already know how to build robust delivery systems

The solution is applying the same infrastructure discipline to AI that you've already applied to applications.

Most organizations have proven they can build robust delivery systems. Among organizations with mature cloud-native practices, 91% have implemented CI/CD pipelines, according to the same CNCF report. 58% have implemented GitOps: Infrastructure-as-Code, automated deployment pipelines, robust rollback capabilities, and comprehensive audit trails. The most advanced organizations check in code multiple times per day (74%), run daily releases (41%), and automate most deployments (59%).

These capabilities didn't appear overnight. They required organizational commitment, infrastructure investment, and cultural transformation. You broke down silos between development and operations. You automated manual processes. You treated infrastructure as code.

The gap isn't that AI requires fundamentally different capabilities. The gap is that most organizations haven't applied the same rigor to AI deployment that they've applied to application deployment. You've built sophisticated delivery systems for stateless apps. Now you need to adapt those systems for stateful model serving: bigger artifacts, different validation gates, specialized resource requirements, but with the same foundational discipline.

What "AI-ready delivery" actually looks like

AI-ready delivery is a concrete set of capabilities that platform teams can build by adapting existing cloud-native practices.

The “boring” foundation

The CNCF report describes Kubernetes as "boring" and means it as the highest praise. Boring means reliable, predictable, mature, stable and aligned with industry standards. The infrastructure that makes AI deployment reliable is the same boring infrastructure that makes application deployment reliable. 66% of organizations already run generative AI workloads on Kubernetes, proving you don't need specialized ML platforms; you just need battle-tested orchestration with the right adaptations.

Platform engineering as the bridge

Platform engineering bridges the gap between data science experimentation and production-grade deployment. Research from Google Cloud and Enterprise Strategy Group shows that 55% of organizations have already adopted platform engineering, with 90% of those planning to expand it to more developers. Platform engineering has become a mainstream approach for scaling technology capabilities, including AI.

As Platform Engineering explains in their framework for AI and platform engineering, there's a critical distinction between "AI platform engineering" (using AI to power internal developer platforms) and "platforms for AI" (infrastructure purpose-built for ML workloads). The latter category enables deployment velocity.

According to Platform Engineering, "these [platforms for AI] are equipped with high-performance hardware like GPUs, TPUs, and NPUs, and they dynamically handle intensive training and inference processes." They provide the foundational capabilities like resource scheduling, artifact management, and deployment automation that data science teams need but shouldn't have to build themselves.

This transformation is already underway: 75% of platform engineering teams are either hosting or preparing to host AI workloads, according to the State of AI in Platform Engineering report. Platform teams are moving from AI users to AI enablers.

The infrastructure layer

The infrastructure layer handles the mechanics of getting models from code to production. This is where most organizations can adapt existing capabilities from application delivery, but only if they recognize that adaptation is necessary.

An AI-ready delivery system includes:

- CI/CD pipelines that treat models like code artifacts

- GitOps workflows for model deployment

- Container orchestration with GPU scheduling capabilities – Kubernetes provides this, but requires configuration for GPU resources

- Observability that includes model-level metrics (drift, accuracy, inference latency), not just infrastructure health

The operational layer

Beyond infrastructure, the operational layer addresses what happens after deployment: validation, monitoring, and safe rollout strategies. This is where AI's statistical nature creates the most meaningful departure from application deployments. Traditional health checks don't work; you need to verify model behavior, not just process availability.

At the operational level, AI-ready delivery requires:

- Automated validation gates adapted for statistical testing beyond functional checks

- Model performance monitoring that tracks accuracy, latency, and drift alongside infrastructure metrics, with automated circuit breakers that disable models exceeding defined guardrails

- Canary and blue-green deployment capabilities for model versions, allowing teams to test new models on a subset of traffic before full rollout, with clear rollback mechanisms if performance degrades

The ROI

The return on infrastructure investment is measurable. McKinsey profiled a large bank in Brazil that reduced ML time-to-impact from 20 weeks to 14 weeks – a 30% reduction – by adopting MLOps and data engineering best practices.

The payoff shows up in financial performance. The 2024 DORA Report found that elite performers, defined as those deploying multiple times per day with sub-hour recovery times, are twice as likely to exceed their profitability targets.

Google Cloud and Enterprise Strategy Group research documented similar patterns: 71% of leading platform engineering adopters reported significantly accelerated time to market, compared to just 28% of less mature adopters. Beyond velocity, mature platforms unlock innovation capacity. Organizations with co-managed platforms allocate 47% of their developers' time to innovation and experimentation, versus 38% for those managing platforms entirely with internal staff.

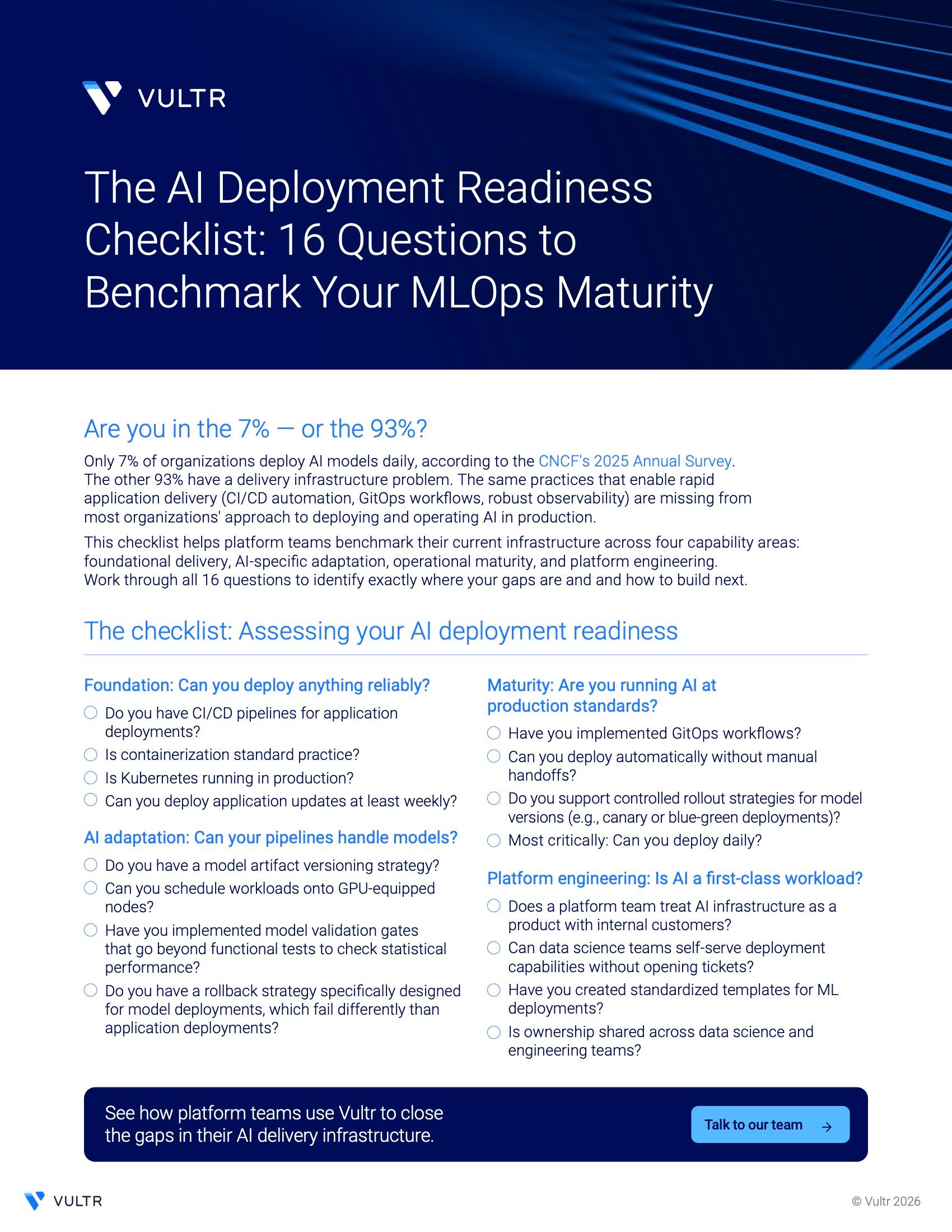

The checklist: Assessing your AI deployment readiness

Platform teams need a practical self-assessment. Use this diagnostic framework to identify where your delivery infrastructure stands.

If you checked fewer than eight boxes across these four categories, you're likely in the 93% who haven’t reached AI deployment velocity. Each unchecked box represents a concrete improvement opportunity.

Building for deployment velocity

The 7% deploying AI daily share a common foundation: mature delivery infrastructure.

The CNCF data identifies "cultural changes with development teams" as the top challenge in cloud-native adoption, cited by 47% of organizations. The same organizational transformation that enabled DevOps must now happen for MLOps. But here's what makes this moment different: Platform teams can start building AI-ready delivery systems today without waiting for enterprise AI strategy to solidify.

The gap between organizations deploying AI daily and those deploying occasionally is widening. Organizations building reliable deployment infrastructure now establish operational advantages that compound over time. Each deployment teaches the system something new. Each failure caught early prevents a production incident. Each automated workflow reduces the cognitive load on the next team.

This is infrastructure work. Start with the checklist. Identify your biggest gap. For most organizations, it's GitOps workflows and deployment automation. Then build the boring, reliable systems that let you deploy any model, from any team, at velocity.

Download the AI Deployment Readiness Checklist to assess your organization's MLOps maturity and identify concrete next steps for improving AI deployment velocity.